AI systems are increasingly embedded in decisions that affect people’s work, finances, healthcare, education, and access to critical services. As artificial intelligence and machine learning products become more capable, they also become more complex and harder for users to understand. This growing gap between what AI systems can do and what users can understand is where trust often begins to break down.

Explainable AI (XAI) was introduced to address this challenge. However, explainability does not exist in isolation. Users never interact with models directly; they experience them through interfaces, interaction patterns, language, and design systems. This is where explainable AI UX design plays a defining role.

Without thoughtful UX design for AI products, even technically strong explainability efforts remain invisible, confusing, or ineffective. Trust is not created by algorithms alone; it is built through AI user interface design, interaction patterns, and transparency-focused experiences.

Curious how AI UX transforms opaque systems into human-friendly experiences? Explore explainable AI UX strategies with TheFinch Design.

The Black Box Problem and Why Users Feel Uneasy

One of the most persistent challenges in AI product design is the black box problem. AI systems generate outputs, predictions, or recommendations without clearly communicating how or why those outcomes were produced.

From a technical standpoint, this is often due to the complexity of modern machine learning models, deep learning architectures, or generative AI systems that are inherently difficult to interpret. From a user’s perspective, however, the experience feels like a loss of visibility and control.

A decision appears on the screen, but the reasoning behind it remains hidden.

This lack of clarity creates discomfort and skepticism. Users may question:

- Whether the AI system is accurate

- Whether it is fair or biased

- Whether it is appropriate for their specific situation

Some users accept outcomes blindly because they feel disempowered to challenge them. Others reject AI systems entirely because they do not trust what they cannot understand.

The black box problem is not just a technical limitation; it becomes a UX problem the moment an AI interface presents an outcome without meaningful context.

Feeling uneasy with your AI interface’s lack of transparency? See how expert design can make complex AI understandable and trustworthy.

Why Explainable AI UX Design Is Essential

Explainable AI UX design exists to bridge the gap between complex machine learning behavior and human comprehension. Users do not need access to every internal calculation or model parameter. What they need is relevant, actionable insight that helps them make informed decisions.

This is where UX design for AI products and machine learning product design become critical. User experience directly shapes:

- How users interpret AI behavior

- How much trust they place in automated decisions

- How willing they are to rely on AI systems over time

When explainability is treated as a core UX principle rather than an afterthought, AI systems feel more predictable, less intimidating, and more aligned with human expectations.

Want to bridge the gap between machine decisions and human confidence? Talk to us about explainable AI UX solutions.

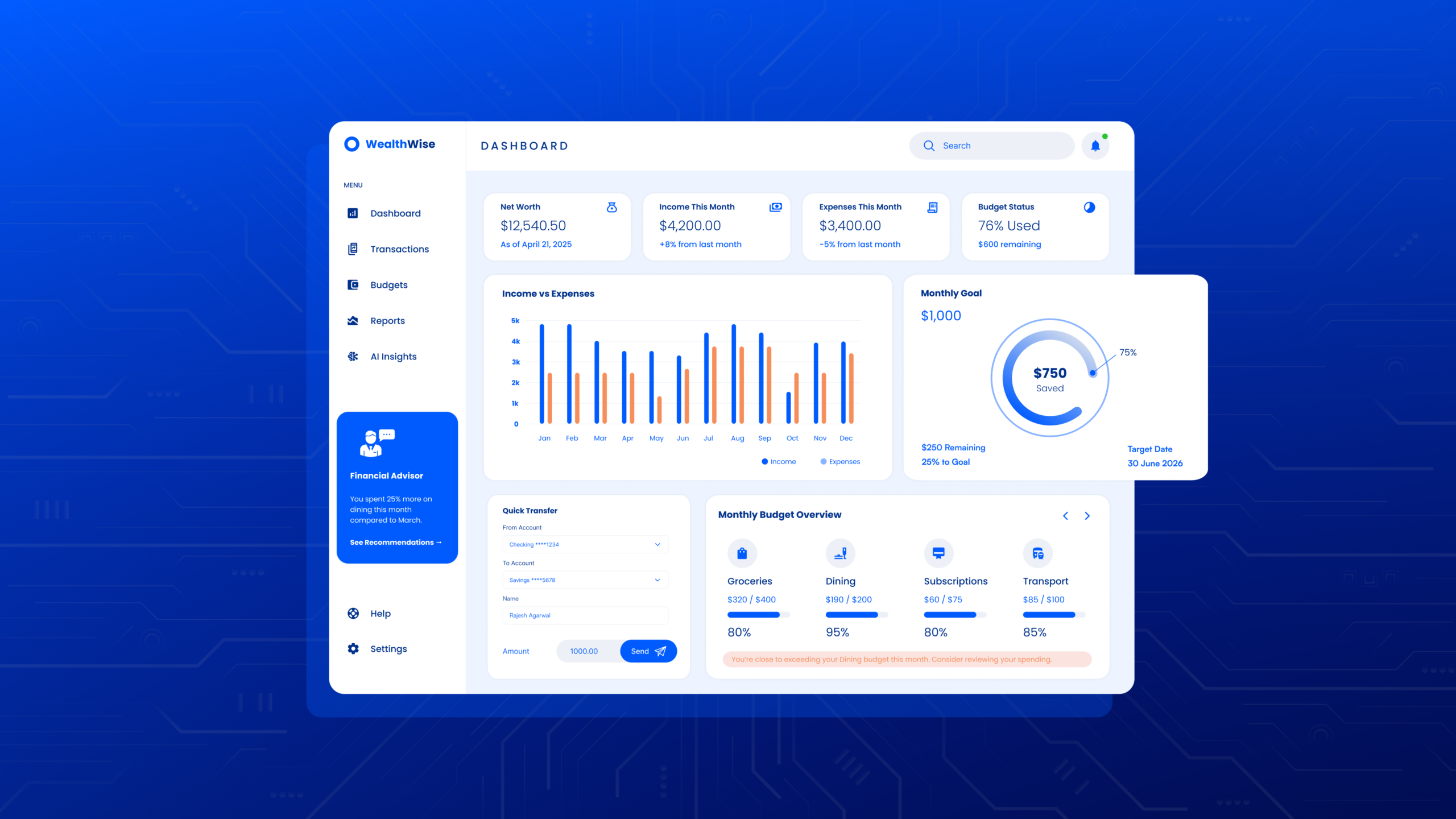

Applying XAI UI Principles in Real AI Products

Effective XAI UI principles prioritize relevance, clarity, and timing. Explanations should appear when users need them, not as constant interruptions that increase cognitive load.

A widely used approach is layered explainability, which includes:

- A concise, human-readable explanation shown by default

- Visual indicators that communicate confidence, uncertainty, or influencing factors

- Optional deeper explanations for users who want more technical or contextual detail

This layered structure supports multiple levels of engagement without overwhelming users. It also reduces the black box effect by making AI reasoning visible in manageable steps.

AI interfaces that follow this approach feel informative, respectful, and trustworthy rather than intrusive.
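As a rough illustration, the three layers described above could be modeled as a small data structure that the interface renders at different depths. This is a minimal sketch, not a reference implementation: the names `LayeredExplanation`, `confidence_label`, and `render`, as well as the 0.8 and 0.5 thresholds, are assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class LayeredExplanation:
    """Hypothetical container for the three explanation layers."""
    summary: str                 # layer 1: concise text shown by default
    confidence: float            # layer 2: 0.0 to 1.0, drives a visual indicator
    details: list = field(default_factory=list)  # layer 3: optional deeper detail


def confidence_label(confidence: float) -> str:
    """Map a numeric score to a label; the thresholds are illustrative only."""
    if confidence >= 0.8:
        return "High confidence"
    if confidence >= 0.5:
        return "Moderate confidence"
    return "Low confidence"


def render(explanation: LayeredExplanation, expanded: bool = False) -> list:
    """Return the lines to display; deeper detail appears only on request."""
    lines = [explanation.summary, confidence_label(explanation.confidence)]
    if expanded:
        lines.extend(explanation.details)
    return lines


example = LayeredExplanation(
    summary="Recommended because you viewed similar items this week.",
    confidence=0.72,
    details=["Viewed 4 related items", "Matches 2 saved preferences"],
)
default_view = render(example)
expanded_view = render(example, expanded=True)
```

Keeping the deeper layer behind an explicit `expanded` flag mirrors the idea that users opt in to more detail rather than having it pushed at them.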

Designing Interpretable Machine Learning Interfaces

Interpretable machine learning interfaces focus on meaning instead of mechanics. Rather than exposing raw technical metrics, probabilities, or model weights, effective AI UX design highlights factors users can easily relate to.

For example, an AI system might explain that a recommendation was influenced by:

- Recent user activity

- Past preferences

- Contextual or environmental data

This approach supports UX design for AI products by translating system behavior into familiar concepts. Users gain insight without needing technical expertise in data science or machine learning.

This translation layer is essential; it is what allows explainability to function at the experience level, not just the technical level.
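One way to picture this translation layer is a lookup that turns internal feature names into user-facing phrases and shows only the most influential ones. The sketch below is an assumption-heavy illustration: the feature names, the `FRIENDLY_LABELS` mapping, and the contribution scores are all hypothetical, and a real product would obtain such scores from whatever attribution method its model supports.

```python
# Hypothetical mapping from internal feature names to user-facing phrases.
FRIENDLY_LABELS = {
    "recent_activity_score": "Your recent activity",
    "preference_match": "Your past preferences",
    "context_signal": "Your current context",
}


def top_factors(contributions: dict, limit: int = 2) -> list:
    """Return friendly labels for the most influential known features.

    Features without a friendly label are skipped so that raw internal
    names never reach the interface.
    """
    known = [(name, score) for name, score in contributions.items()
             if name in FRIENDLY_LABELS]
    ranked = sorted(known, key=lambda item: abs(item[1]), reverse=True)
    return [FRIENDLY_LABELS[name] for name, _ in ranked[:limit]]


# Illustrative scores only; not taken from any real model.
scores = {
    "recent_activity_score": 0.42,
    "preference_match": -0.10,
    "context_signal": 0.25,
    "embedding_dim_17": 0.90,   # internal feature with no user-facing meaning
}
shown = top_factors(scores)
```

Dropping unlabeled features is a deliberate design choice in this sketch: it trades completeness for wording that users can relate to.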

Transparency That Supports Action

Transparency is often discussed as an ethical requirement, but it is also a usability and interaction design challenge. Too much information can overwhelm users and disrupt workflows. Too little information creates suspicion and disengagement. Responsible AI UX design finds the balance.

High-impact or high-risk decisions require:

- Stronger explanations

- Clear justifications

- Review, appeal, or override options

Low-risk interactions may only need subtle cues or lightweight explanations. Explainable AI UX design recognizes that transparency should enable action, not slow users down.
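The risk-based distinction above can be expressed as a simple policy that decides how much explanation an interface shows. This is a hedged sketch under stated assumptions: the two-level `Risk` enum, the `TransparencyPlan` fields, and the function name `transparency_plan` are invented for illustration, and real products may need more than two tiers.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class TransparencyPlan:
    """Hypothetical description of what the interface should surface."""
    explanation_style: str        # "detailed" or "hint"
    show_justification: bool
    offer_review_or_override: bool


def transparency_plan(risk: Risk) -> TransparencyPlan:
    """Scale the explanation to the stakes of the decision."""
    if risk is Risk.HIGH:
        return TransparencyPlan(
            explanation_style="detailed",
            show_justification=True,
            offer_review_or_override=True,
        )
    # Low-risk interactions get a lightweight cue that does not interrupt.
    return TransparencyPlan(
        explanation_style="hint",
        show_justification=False,
        offer_review_or_override=False,
    )


high_risk_plan = transparency_plan(Risk.HIGH)
playlist_plan = transparency_plan(Risk.LOW)
```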

Want AI transparency that empowers users without overload? Explore our optimized transparency frameworks.

Designing Trust in AI Through Ethical UX Principles

Ethics in AI are not abstract concepts; they are experienced directly through interfaces and interactions. Users encounter ethical considerations when:

- They are informed that AI is involved

- They are given choices or alternatives

- They are allowed to question or challenge outcomes

Designing trust in AI means making these moments visible, understandable, and respectful. This is where ethical AI UX principles come into practice.

AI bias mitigation UX is especially important. Interfaces can:

- Signal uncertainty or confidence levels

- Prompt users to review AI-generated results

- Encourage additional human input when confidence is low

These design choices reduce harm even when bias cannot be fully eliminated at the model level.
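The interface behaviors listed above might be driven by a small rule that checks model confidence before presenting a result. The following is only a sketch: the function name `review_prompt`, the 0.6 threshold, and the message wording are assumptions for this example, and an appropriate threshold would depend on the product and its risks.

```python
from typing import Optional


def review_prompt(confidence: float, threshold: float = 0.6) -> Optional[str]:
    """Return a prompt asking for human review when confidence is low.

    Returns None when the score meets the (illustrative) threshold, in
    which case the interface shows the result without an extra prompt.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if confidence < threshold:
        percent = round(confidence * 100)
        return (f"The system is only {percent}% confident in this result. "
                "Please review it before continuing.")
    return None


low = review_prompt(0.41)
high = review_prompt(0.93)
```

A rule like this does not remove bias from the model; it only makes sure that low-confidence outputs reach a person who can question them.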

Looking to build ethical, human-centered AI experiences? Partner with us, the ethical AI UX experts.

Supporting User Understanding of AI Decisions Over Time

User understanding of AI decisions develops gradually. It is shaped by:

- Consistency in explanations

- Repetition across interactions

- Clear feedback loops

When explanation styles change frequently or behave unpredictably, users struggle to form reliable mental models of how the AI system works.

Strong explainable AI UX design treats understanding as an ongoing relationship, not a one-time disclosure. Each interaction reinforces clarity rather than resetting expectations.

This approach is especially critical in AI assistant UI design, generative AI UX design, and machine learning platform UX, where systems evolve continuously.

Responsible AI UX as a Continuous Design Effort

Responsible AI UX is not a feature that can be completed once and forgotten. As AI systems learn, adapt, and expand into new use cases, explanation patterns must evolve alongside them.

Design teams working in machine learning product design need to revisit transparency strategies whenever:

- Models change

- Capabilities expand

- User contexts shift

What felt sufficient during early adoption may feel inadequate later.

Effective UX design for AI products remains adaptable without making the system feel unstable or unpredictable. Responsibility in AI UX is expressed through consistency, openness, and responsiveness to user feedback.

Measuring Trust and Transparency in AI Products

Traditional UX metrics focus on usability, but trust requires additional signals. The following indicators all reveal how users perceive AI decisions:

- User feedback

- Hesitation or override behavior

- Support requests and complaints
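Override behavior is one of the easier signals to quantify. As a minimal sketch, assuming a hypothetical interaction log in which each event records whether the user accepted or overrode an AI suggestion, an override rate could be computed like this. The event schema and the function name `override_rate` are assumptions for illustration.

```python
def override_rate(events: list) -> float:
    """Share of AI suggestions that users overrode.

    Each event is assumed to be a dict with a "type" key; only
    "accepted" and "overridden" events count as decisions.
    """
    decisions = [e for e in events if e.get("type") in ("accepted", "overridden")]
    if not decisions:
        return 0.0
    overridden = sum(1 for e in decisions if e["type"] == "overridden")
    return overridden / len(decisions)


# Illustrative log only; not real usage data.
log = [
    {"type": "accepted"},
    {"type": "overridden"},
    {"type": "accepted"},
    {"type": "viewed_explanation"},   # not a decision, so it is ignored
    {"type": "accepted"},
]
rate = override_rate(log)
```

A rising override rate is not automatically bad: it can mean users distrust the system, or that they feel empowered to correct it, so it is best read alongside feedback and support requests.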

Users may continue using an AI system even when accuracy fluctuates, as long as they feel informed, respected, and in control. High performance alone does not guarantee trust if users feel excluded from the reasoning process.

Explainable AI UX design succeeds when users are comfortable relying on AI while still feeling empowered to question it.

Moving Beyond the Black Box With Explainable AI UX

The black box problem will not disappear entirely, especially as AI systems grow more complex. What can change is how that complexity is presented to users.

When AI user interface design actively reduces opacity, AI systems feel more approachable, accountable, and human-centered. Users become better equipped to:

- Understand outcomes

- Identify potential issues

- Participate meaningfully in decision-making

Explainable AI does not eliminate complexity; it makes complexity workable. Through thoughtful UX strategies, ethical design principles, and transparency-driven interfaces, AI products can build lasting trust without overwhelming the people they are built for.

Partner With TheFinch Design, the AI UX Design Agency That Brings Explainable AI to Life

Explainable AI UX design is a strategic imperative for modern digital products. When users interact with machine learning systems, trust and transparency are built not through algorithms alone but through thoughtfully designed interfaces, clear explanations, and human-centered AI experiences.

This is where TheFinch Design stands out as a leading AI UX design agency and machine learning product design partner. With deep expertise in AI user interface design, UX design for AI products, interaction design, usability testing, and UX research, TheFinch Design transforms abstract explainability concepts into clear, actionable experiences that users understand and trust.

By blending human-centered design principles with AI-led insights and data-driven research, TheFinch Design crafts interfaces that reduce opacity, mitigate bias through intelligent feedback patterns, and empower users with transparent AI decision logic.

Whether you’re building an AI assistant UI, machine learning platform UX, or generative AI system, our services help your product feel intuitive, reliable, and ethically aligned with user expectations.

When you work with us, you’re not just improving aesthetics; you’re building trust, credibility, and long-term user engagement through responsible AI UX design that scales with your product’s complexity and impact.

Ready to elevate your AI experience and create explainable, human-focused AI products? Visit https://thefinch.design/ and start designing AI with trust at its core.

FAQs

Q: What is explainable AI UX design?

A: Explainable AI UX design refers to the practice of designing user interfaces and interactions that help users understand the reasoning and behavior of AI systems. It focuses on clarity, transparency, relevance, and presenting AI decisions in human-meaningful terms so that users can interpret and trust outputs.

Q: Why is UX design essential for AI products?

A: UX design is essential for AI products because it bridges the gap between complex machine learning behavior and human understanding. Good UX ensures users can interpret outcomes, feel confident in decisions, and interact with AI systems effectively and intuitively.

Q: How does explainable AI improve user trust?

A: Explainable AI improves user trust by offering clear, relevant insights into how decisions are made. Effective explainability uses layered explanations, visual indicators of confidence, and human-readable reasoning that reduce the black box effect and make AI behavior understandable.

Q: What services does an AI UX design agency like TheFinch Design provide?

A: An AI UX design agency like TheFinch Design offers expertise in AI user interface design, UX research, interaction design, usability testing, machine learning product design, design systems, prototype development, and strategic guidance, all focused on creating transparent, trustworthy, and human-centered AI experiences.

Q: How can explainable AI UX design benefit machine learning platforms?

A: Explainable AI UX design benefits machine learning platforms by making model behavior interpretable for users, improving clarity in outcomes, enabling proactive feedback loops, and supporting ethical, transparent decision-making workflows that users can understand and trust.