By 2026, voice interfaces have matured from experimental features into first-class interaction channels. This article explains what voice user interface design is, how a voice user interface works, and, most importantly, the voice user interface design best practices we use at TheFinch Design to ship reliable, usable, and business-impacting voice experiences. It’s written for UX/product designers, conversation designers, and engineers building voice-enabled apps, assistants, or multimodal products.

Quick definitions

Voice User Interface (VUI): an interface that lets users interact with software through spoken commands and audio feedback. VUIs combine automatic speech recognition (ASR), natural language understanding (NLU), dialogue management and speech synthesis (text-to-speech, TTS).

Voice user interface design (sometimes referred to as VUI design) is the discipline of shaping those spoken interactions: it determines what the system listens for, how it processes that input and what responses it gives.

Quick answer: How does VUI work?

A user speaks → ASR transcribes the audio → NLU maps the text to intents and slots → the dialogue manager decides on actions and responses → the backend/API performs the action → TTS or multimodal output delivers the result. Current state-of-the-art systems add context, personalization and on-device ML to reduce latency and improve privacy.
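To make the hand-offs concrete, here is a minimal sketch of one turn through that pipeline. Every stage is a stub standing in for a real component: `asr`, `nlu`, `dialogue_manager`, `backend` and `tts` are hypothetical names, and the keyword-based NLU is a toy, not how production intent models work.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass
class NluResult:
    intent: str
    slots: dict = field(default_factory=dict)


def asr(audio: bytes) -> str:
    # Stub: a real system would run a speech recognizer on the audio here.
    return audio.decode("utf-8")


def nlu(text: str) -> NluResult:
    # Stub: a toy keyword rule standing in for a trained intent/slot model.
    words = text.lower().split()
    if "timer" in words:
        minutes = next((w for w in words if w.isdigit()), None)
        return NluResult("start_timer", {"minutes": minutes} if minutes else {})
    return NluResult("unknown")


def dialogue_manager(result: NluResult) -> Tuple[Optional[str], str]:
    # Decide which backend action to run (if any) and what to say back.
    if result.intent == "start_timer" and "minutes" in result.slots:
        return "timer.start", f"Timer set for {result.slots['minutes']} minutes."
    if result.intent == "start_timer":
        return None, "For how many minutes?"  # ask only for the missing slot
    return None, "I can set timers. Try 'set a timer for 5 minutes'."


def backend(action: str) -> None:
    # Stub: a real app would call an API or database here.
    print(f"[backend] {action}")


def tts(text: str) -> bytes:
    # Stub: a real system would synthesize audio; here we just encode the text.
    return text.encode("utf-8")


def handle_turn(audio: bytes) -> bytes:
    text = asr(audio)
    action, reply = dialogue_manager(nlu(text))
    if action is not None:
        backend(action)
    return tts(reply)
```

Keeping each stage behind its own function boundary is what later lets you attribute errors to ASR, NLU or dialogue design separately.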

Why VUI still matters in 2026 (and where VUI wins)

Voice is uniquely powerful when hands, eyes or screens are unavailable: while driving, cooking or exercising, in accessibility scenarios, and for ambient smart-home interactions. Over the past few years, we’ve watched voice mature into multimodal conversational platforms (voice + screen + visual cards + system events), so designers must approach voice as part of an interaction ecosystem, not as a silo. Recent platform improvements (including assistants powered by generative AI) retain more context and allow more natural follow-ups, but they have also introduced new privacy challenges and expectation-management problems.

Principles of modern VUI design

These are our non-negotiables before we write a single line of dialogue.

1. Design for clarity of task, not novelty

Voice is quickest when the user has a specific task and goal. Center your flows on clear user intents (e.g. “start a timer,” “add eggs to shopping list,” “pay bill”) and don’t make open-ended chit-chat the north star of your product. Field studies show that assistants work best on small, transactional tasks.

Actionable: build a ranked intent map and classify each intent as primary, secondary, or ineffective for voice.
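One way to build that map is to score each intent by demand and by how well it suits voice, then bucket the ranking. This is a minimal sketch under our own assumptions: the `weekly_requests` and `voice_fit` fields, the 0.3 fit threshold and the 40% primary share are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Intent:
    name: str
    weekly_requests: int  # demand, e.g. from logs or user research
    voice_fit: float      # 0..1 team judgment: how well it suits a hands-free spoken flow


def classify(intents: List[Intent], primary_share: float = 0.4,
             min_fit: float = 0.3) -> Dict[str, str]:
    """Rank intents by demand x voice fit, then bucket them into tiers."""
    # Intents that don't suit voice are 'ineffective' no matter how popular they are.
    tiers = {i.name: "ineffective" for i in intents if i.voice_fit < min_fit}
    ranked = sorted(
        (i for i in intents if i.voice_fit >= min_fit),
        key=lambda i: i.weekly_requests * i.voice_fit,
        reverse=True,
    )
    n_primary = max(1, round(len(ranked) * primary_share))
    for rank, intent in enumerate(ranked):
        tiers[intent.name] = "primary" if rank < n_primary else "secondary"
    return tiers
```

Filtering out poor-fit intents before ranking keeps a popular but screen-heavy task (such as browsing a catalog) from taking a primary slot.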

2. Be clear about what you can and cannot do

Users should know what the assistant can do. If you don’t tell them, they’ll keep trying everything and grow dismissive of your guidance. State the assistant’s capabilities during onboarding and remind users of them occasionally, when it would help.

Example: “I can add things to your shopping list or set timers. I can’t place orders yet. Would you like to open the app?”

3. Keep responses brief, move the conversation forward

Observe the conversational maxim: say as much as is required, but no more. Every voice response should either complete the user’s task or give a very clear hint about what to do next. Deep content is best displayed on screen rather than read aloud.

Actionable: as a heuristic, keep spoken responses to one or two sentences; if there is more to convey, show it as bullets on a visual display instead of reading it aloud.
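A minimal sketch of that heuristic, assuming the spoken budget is counted in sentences and that the device has a screen for the overflow. The naive regex sentence splitter and the `split_response` name are our own illustrative choices; abbreviations like “Dr.” would need better handling.

```python
import re
from typing import List, Tuple

MAX_SPOKEN_SENTENCES = 2  # assumed budget for what is read aloud


def split_response(text: str, max_sentences: int = MAX_SPOKEN_SENTENCES) -> Tuple[str, List[str]]:
    """Return (speech, card_bullets): the first sentences are spoken, the rest go to a card."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]
    speech = " ".join(sentences[:max_sentences])
    card = sentences[max_sentences:]
    if card:
        # Point the user at the screen instead of reading everything out.
        speech += " I've put the rest on your screen."
    return speech, card
```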

4. Use progressive disclosure

Expose complexity gradually. Collect details at slot-filling time, and only when you need them. This can add turns, but that’s fine if users don’t notice them. Start with a lightweight confirmation and ask follow-up questions only when you have to (e.g., when a slot value is ambiguous). This reduces cognitive load and error rate. Amazon and Google both advise, in their respective documentation, keeping repair flows short.
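Progressive slot filling can be sketched as “ask only for the first missing slot, then confirm lightly”. The `SCHEMAS` table and the `book_meeting` intent below are hypothetical; a user who gives everything up front is never asked a follow-up question at all.

```python
from typing import Dict

# Hypothetical intent schema: prompts are only used when a slot is still missing.
SCHEMAS = {
    "book_meeting": {
        "slots": {
            "date": "What day should I book it for?",
            "time": "What time?",
        },
        "confirm": "OK, booking it for {date} at {time}.",
    },
}


def next_prompt(intent: str, filled: Dict[str, str]) -> str:
    """Ask for the first missing slot; once everything is filled, confirm lightly."""
    schema = SCHEMAS[intent]
    for slot, prompt in schema["slots"].items():
        if not filled.get(slot):
            return prompt
    return schema["confirm"].format(**filled)
```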

5. Design errors deliberately (error-tolerant flows)

Design for misrecognition and misinterpretation: offer confirmations for sensitive actions, provide simple undo paths, and use constrained vocabularies for critical commands (dates, amounts, recipients). Research shows that error correction and visibility of system status are major pain points in VUIs.
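A simple undo path can be as small as a stack of “how to reverse this” callbacks recorded after each completed action. This is a minimal sketch; `UndoStack` and its methods are hypothetical names, and a real app would persist the entries and reverse actions through its backend.

```python
from typing import Callable, List, Tuple


class UndoStack:
    """Remembers how to reverse each completed action so 'undo' is always one phrase away."""

    def __init__(self) -> None:
        self._entries: List[Tuple[str, Callable[[], None]]] = []

    def record(self, description: str, undo_fn: Callable[[], None]) -> None:
        self._entries.append((description, undo_fn))

    def undo_last(self) -> str:
        if not self._entries:
            return "There's nothing to undo."
        description, undo_fn = self._entries.pop()
        undo_fn()  # reverse the most recent action
        return f"Undone: {description}."
```

Usage: after adding eggs to a list, call `stack.record("added eggs to your list", lambda: items.remove("eggs"))`; when the user says “undo”, speak the string returned by `stack.undo_last()`.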

Concrete VUI design patterns you can reuse (phrases)

Note: these patterns have been tested and used in real projects we’ve launched for e-commerce, logistics and B2B dashboards.

Onboarding script (first 30 seconds)

“Welcome back to AppName. I can look up orders, monitor deliveries and keep you posted about delays. For example: ‘Track my last shipment’ or ‘Search order 5789’. What would you like to do?”

Confirmation structure

User: “Cancel my subscription.”

System: “I’m going to cancel your Pro subscription, and you’ll lose access to premium services. Say ‘Yes, cancel’ to proceed or ‘No’ to keep your subscription.”

Clarification pattern (ambiguous input)

User: “Set up a meeting for next Monday.”

System: “Do you mean Monday the 12th, or the Monday after, the 19th? Say ‘12th’ or ‘19th’.”
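The two candidate dates in that prompt can be computed rather than hard-coded. A minimal sketch using the standard `datetime` module; the assumption that “next Monday” means either the coming Monday or the one after it (and that on a Monday it skips today) is ours.

```python
from datetime import date, timedelta
from typing import Tuple


def ordinal(n: int) -> str:
    # 1st, 2nd, 3rd, 4th ... 11th, 12th, 13th ... 21st, 22nd ...
    if 11 <= n % 100 <= 13:
        return f"{n}th"
    suffix = {1: "st", 2: "nd", 3: "rd"}.get(n % 10, "th")
    return f"{n}{suffix}"


def next_monday_candidates(today: date) -> Tuple[date, date]:
    """'Next Monday' is ambiguous: the coming Monday, or the one a week later."""
    days_ahead = (0 - today.weekday()) % 7 or 7  # Monday is weekday() == 0
    coming = today + timedelta(days=days_ahead)
    return coming, coming + timedelta(days=7)


def clarification_prompt(today: date) -> str:
    first, second = (ordinal(d.day) for d in next_monday_candidates(today))
    return f"Do you mean Monday the {first}, or the Monday after, the {second}? Say '{first}' or '{second}'."
```

For example, on Wednesday 7 January 2026 the candidates are the 12th and the 19th, matching the prompt above.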

Fallback pattern (graceful degradation)

System: “I didn’t catch that. You can say ‘Repeat’ to hear the last step again, ‘Help’ for examples, or a short command such as ‘Add milk to shopping list’.”

These compact patterns are low-friction and consistent with platform guidelines.
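The fallback pattern works best when it escalates: a short reprompt first, then examples, then a hand-off. A minimal sketch; the three prompt texts and the “open the app” hand-off are assumptions for illustration.

```python
from typing import Tuple

FALLBACKS: Tuple[str, ...] = (
    "Sorry, I didn't catch that.",
    "I didn't catch that. You can say 'Repeat' to hear the last step again, "
    "'Help' for examples, or a short command such as 'Add milk to shopping list'.",
    "I'm still having trouble. I've opened the app so you can finish on screen.",
)


class FallbackHandler:
    """Escalate reprompts on consecutive misses; reset after any successful turn."""

    def __init__(self, prompts: Tuple[str, ...] = FALLBACKS) -> None:
        self.prompts = prompts
        self.misses = 0

    def on_miss(self) -> str:
        # Stay on the last (hand-off) prompt once every level has been used.
        prompt = self.prompts[min(self.misses, len(self.prompts) - 1)]
        self.misses += 1
        return prompt

    def on_success(self) -> None:
        self.misses = 0
```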

In the wild: what we’ve learned first-hand (practice-based)

Example 1 — E-commerce “quick reorder”

At TheFinch Design, we built a “quick reorder” voice flow for a regional grocery chain. The main issues were confusing product names and similar SKUs. Our solutions:

- Provide succinct disambiguation prompts (“Do you mean Organic Gala Apples or Gala Apples?”)

- Confirm quantity with a quick voice confirmation and display a cart card in the app.
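A disambiguation prompt like the one above can be generated by fuzzy-matching the ASR text against the catalog. This sketch uses the standard library’s `difflib.get_close_matches`; the catalog slice and the 0.6 cutoff are assumptions, and a production system would more likely match against SKU synonyms and purchase history.

```python
import difflib
from typing import List

# Hypothetical catalog slice; real SKUs would come from the product database.
CATALOG = ["Organic Gala Apples", "Gala Apples", "Granny Smith Apples", "Whole Milk"]


def disambiguation_prompt(heard: str, catalog: List[str] = CATALOG) -> str:
    by_lower = {name.lower(): name for name in catalog}
    matches = difflib.get_close_matches(heard.lower(), list(by_lower), n=2, cutoff=0.6)
    if not matches:
        return "I couldn't find that product. What's it called?"
    if len(matches) == 1:
        return f"Adding {by_lower[matches[0]]}. How many?"  # confirm quantity next
    first, second = (by_lower[m] for m in matches)
    return f"Do you mean {first} or {second}?"
```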

Impact: checkout completion for voice-initiated reorders increased by 27% compared to flows without confirmation.

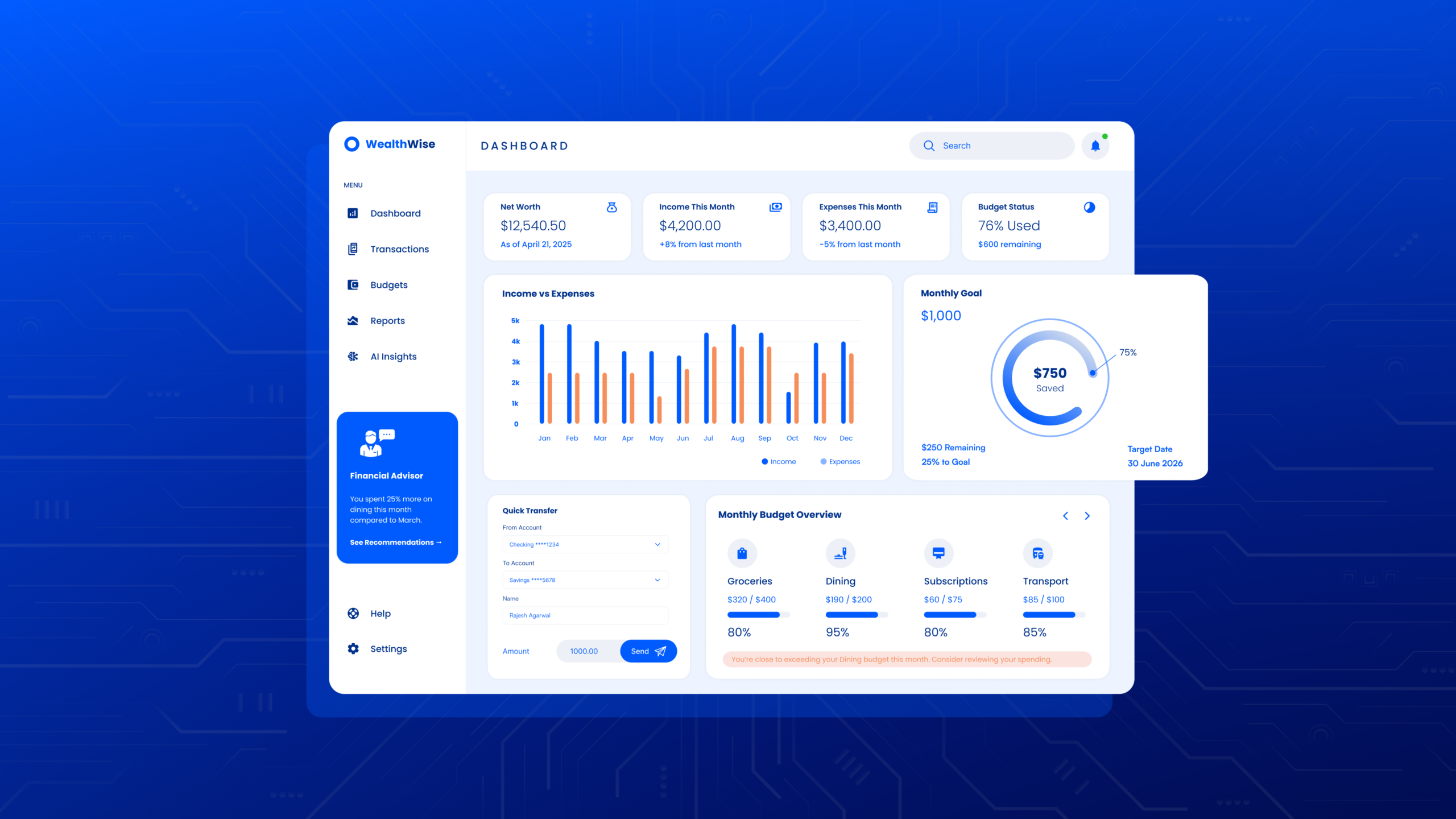

Example 2: Multimodal logistics dashboard

We paired voice commands with a map view in a delivery app. Voice handled high-level commands (“Show me delays in zone B”), while visual cards displayed the details. This avoided long readouts and let drivers glance safely at maps while still using voice. Cross-modal fallback reduced error-related aborts by about 35%, as observed in our telemetry.

(This is in line with industry guidance to design voice and screen as a single product experience.)

What are common voice user interface usability issues, and how can you address them?

This section answers “What are common voice user interface usability issues?” by pairing each issue with a targeted mitigation.

1. Poor intent coverage

Problem: Users attempt commands outside your supported intents.

Fix: Log unknown intents, review the top failures, and either extend coverage or fall back gracefully to an alternative.
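An unknown-intent logger can start as a normalized counter that surfaces the most frequent misses. A minimal in-memory sketch with `collections.Counter`; the `UnknownIntentLog` class is hypothetical, and in production you would write anonymized utterances to your analytics store instead.

```python
from collections import Counter
from typing import List, Tuple


class UnknownIntentLog:
    """Count utterances the NLU could not map, so the team can review top failures."""

    def __init__(self) -> None:
        self.counts: Counter = Counter()

    def record(self, utterance: str) -> None:
        # Light normalization so "Reorder milk" and "reorder  milk " count together.
        self.counts[" ".join(utterance.lower().split())] += 1

    def top(self, n: int = 10) -> List[Tuple[str, int]]:
        return self.counts.most_common(n)
```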

2. Long, robotic responses

Problem: Responses are too long, too formal, or sound robotic through TTS.

Fix: Short sentences, natural prosody in TTS and multimodal cards for long content.

3. Lack of status/feedback

Problem: Users can’t tell whether the system is working or waiting for input.

Fix: Offer audible cues or short progress messages (such as “Looking for your orders: this could take a moment”).
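One way to avoid dead air is to speak a progress message only when the backend is slow. A minimal `asyncio` sketch; the `with_progress` helper, the `say` callback and the one-second threshold are assumptions, not platform APIs.

```python
import asyncio
from typing import Awaitable, Callable


async def with_progress(
    call: Awaitable[str],
    say: Callable[[str], None],
    message: str = "Looking for your orders: this could take a moment.",
    threshold_s: float = 1.0,
) -> str:
    """Await a backend call; if it is still running after threshold_s, speak a progress cue."""
    job = asyncio.ensure_future(call)
    done, _ = await asyncio.wait({job}, timeout=threshold_s)
    if not done:
        say(message)  # tell the user we're still working instead of going silent
    return await job
```

Fast responses stay silent, so the cue never adds noise to quick lookups.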

4. Privacy surprise

Problem: Users don't know what’s kept or used.

Fix: Explain data use clearly during onboarding, and offer local processing or an opt-out when available. Platform guidance and regulation are getting stricter about privacy disclosures: treat them as a product requirement.

5. Dangerous actions from overly broad NLU models

Problem: Open-ended systems can infer actions from unclear commands and act on them.

Fix: Gate critical actions behind explicit confirmation and require authorisation.
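The gate itself can be a small, explicit decision function that runs before any backend call. A minimal sketch; the intent names in `CRITICAL_INTENTS` and the returned action labels are hypothetical.

```python
# Hypothetical list of intents that must never run on a single, unconfirmed utterance.
CRITICAL_INTENTS = {"cancel_subscription", "send_payment", "change_profile"}


def gate(intent: str, confirmed: bool, authenticated: bool) -> str:
    """Decide what happens next; critical intents need confirmation and authorisation."""
    if intent not in CRITICAL_INTENTS:
        return "execute"
    if not confirmed:
        return "ask_confirmation"  # e.g. "Say 'Yes, cancel' to proceed"
    if not authenticated:
        return "require_auth"      # e.g. hand off to app-based verification
    return "execute"
```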

Studies and surveys consistently identify recognition errors, lack of context awareness and conversational design issues as the main reasons for user dissatisfaction.

Important success metrics for VUIs

Track both behavioural and qualitative metrics:

- Task completion rate - percentage of queries where the goal is achieved without escalation

- First-turn success - percentage of tasks completed on the first turn

- Average number of turns to complete a task - lower is better for simple tasks

- Recovery rate - percentage of users who recover after an error

- Time on task - time taken to complete a specific task (for time-sensitive tasks)

- Satisfaction score/SUS - to be collected after a flow

- Retention and conversion lift - business metrics that are linked to voice flows
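The behavioural metrics above can be computed from per-session logs. A minimal sketch, assuming each session records whether it completed, how many user turns it took, and whether it hit an error; the `Session` fields are our own schema, not a platform export format.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Session:
    completed: bool  # goal achieved without escalation (to a human or the app)
    turns: int       # user turns until completion or abandonment
    had_error: bool  # at least one misrecognition or fallback prompt


def summarize(sessions: List[Session]) -> Dict[str, float]:
    if not sessions:
        return {}
    total = len(sessions)
    completed = [s for s in sessions if s.completed]
    errored = [s for s in sessions if s.had_error]
    return {
        "task_completion_rate": len(completed) / total,
        "first_turn_success": sum(1 for s in completed if s.turns == 1) / total,
        "avg_turns_to_complete": (
            sum(s.turns for s in completed) / len(completed) if completed else 0.0
        ),
        # Recovery: sessions that hit an error but still reached the goal.
        "recovery_rate": (
            sum(1 for s in errored if s.completed) / len(errored) if errored else 0.0
        ),
    }
```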

Capture unknown intents and anonymized transcripts from real users for testing. A/B test different prompt and confirmation wording.
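For wording A/B tests, each user should hear the same variant on every turn. A minimal sketch of deterministic hash-based bucketing with `hashlib`; the experiment name and both confirmation wordings are illustrative.

```python
import hashlib
from typing import List

# Hypothetical wording variants for the cancellation confirmation.
VARIANTS = {
    "A": "Say 'Yes, cancel' to proceed or 'No' to keep your subscription.",
    "B": "Should I go ahead and cancel? Say yes or no.",
}


def assign_variant(user_id: str, experiment: str, variants: List[str]) -> str:
    """Deterministic bucketing: the same user always lands in the same variant."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    return variants[int.from_bytes(digest[:8], "big") % len(variants)]
```

Salting the hash with the experiment name keeps assignments independent across experiments.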

Testing and validation: how to validate a VUI design before production

- Wizard of Oz testing for early concepts - a human acts as the assistant so you can prototype flows before building ASR or NLU.

- Run closed alpha tests with a sample of power users - this helps you collect realistic utterances and slot values.

- When analyzing transcripts and errors, classify each error as ASR, NLU, or dialogue design.

- Run stress tests with noisy audio and fast or accented speech.

- Conduct multimodal usability studies to verify that voice and visual elements work together (e.g., driving-simulator and kitchen tests).

- Continuous, staged rollout with telemetry - monitor production signals and release incremental fixes.
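Error classification can be partly automated when you have a human reference transcript and a labelled intent for each failed turn. A minimal sketch that attributes a turn to the earliest stage that went wrong; the exact-match transcript comparison is a simplifying assumption (many teams use a word-error-rate threshold instead).

```python
def _normalize(text: str) -> str:
    return " ".join(text.lower().split())


def triage(reference: str, asr_text: str, expected_intent: str,
           predicted_intent: str, task_completed: bool) -> str:
    """Attribute a turn to the earliest pipeline stage that went wrong."""
    if _normalize(reference) != _normalize(asr_text):
        return "ASR"              # the words themselves were misheard
    if expected_intent != predicted_intent:
        return "NLU"              # heard correctly, understood incorrectly
    if not task_completed:
        return "dialogue design"  # understood, but the flow still failed
    return "ok"
```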

Amazon and Google both recommend an iterative, data-driven testing and optimization process.

Accessibility & inclusivity (core work, not an afterthought)

Accommodate a range of speech patterns, accents, disfluencies and assistive needs. Provide touch/keyboard alternatives and options for slower speech and repetition. Use appropriate semantic markup for screen readers on on-screen displays. Accessible VUI design extends your reach and supports legal compliance.
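Slower speech and extra pauses are often expressed in SSML. A minimal sketch that wraps a response using the W3C SSML `prosody` and `break` elements; the `to_ssml` helper and its parameters are hypothetical, and supported attribute values vary by platform, so check your platform’s SSML reference.

```python
from xml.sax.saxutils import escape


def to_ssml(text: str, slow: bool = False, pause_ms: int = 0) -> str:
    """Wrap a response in SSML, optionally slowing it down and adding a trailing pause."""
    body = escape(text)  # '&', '<' and '>' must be escaped inside SSML
    if slow:
        body = f'<prosody rate="slow">{body}</prosody>'
    if pause_ms:
        body += f'<break time="{pause_ms}ms"/>'  # give users time to respond
    return f"<speak>{body}</speak>"
```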

Privacy, security and trust

Be transparent about what data you collect and how long you keep it. For sensitive tasks (such as payments or profile changes), require multi-factor authentication and/or app-based verification. If your product uses cloud generative models or context history, make it clear how to opt in or out, and keep opting out simple. Recent platform changes emphasize privacy and transparency; treat them as design constraints.
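Retention limits are easier to honour and audit when purging is a routine job that also reports what it removed. A minimal sketch; the 30-day window, the transcript dict shape and the audit fields are assumptions to adapt to your own policy.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Tuple

RETENTION = timedelta(days=30)  # assumed policy; set it with your legal/privacy review


def purge_expired(transcripts: List[Dict], now: datetime,
                  retention: timedelta = RETENTION) -> Tuple[List[Dict], Dict]:
    """Drop transcripts older than the retention window; return (kept, audit_entry)."""
    cutoff = now - retention
    kept = [t for t in transcripts if t["created_at"] >= cutoff]
    audit = {"purged": len(transcripts) - len(kept), "cutoff": cutoff.isoformat()}
    return kept, audit
```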

A checklist for shipping a production VUI (for devs + designers)

- Intent map + priority matrix.

- Slot taxonomies and canonical slot values.

- Confirmation and undo chains for important actions.

- Error categories & messages (ASR vs NLU vs backend).

- Multimodal fallback UI on screen.

- Accessibility testing (WCAG for visual fallbacks, expanded transcripts).

- Privacy & consent screens + logging policy.

- Ongoing telemetry strategy: successes, rollbacks, unexpected behavior.

- Consistency guidelines for UX writing + TTS intonation notes.

- A/B and canary flags in the rollout plan.

Tools, frameworks and references

- Google Conversations / Interactive Canvas – conversational design + multimodal.

- Amazon Alexa Design Guide (for Skills & Alexa Conversations): guidance on dialog authoring and best practices.

- Nielsen Norman Group research on voice usability and the limitations of assistants.

- Literature and field research on voice usability and types of errors.

(We suggest that teams complement platform guidance with internal logging and usability labs: platform documentation is necessary but insufficient.)

FAQ (voice & AI-search compliant)

Q1: What is VUI design?

A: Voice user interface design is the design of spoken conversational interfaces: the prompts, confirmations, error recovery and visual aids that make up a dialogue. It combines UX writing, conversation design, and the constraints of ASR and TTS.

Q2: How does a voice user interface work?

A: A voice user interface works like this: the user speaks → automatic speech recognition transcribes the audio into text → natural language understanding determines the intent behind the text → the dialogue manager decides which action the input triggers and how to respond → the back end processes the request via an API or database query → text-to-speech converts the response back into speech for the user. If the device has a screen, it may display an image or a “card” instead. Newer systems add context, personalization, and local models to help reduce latency.

Q3: What are best practices for designing voice user interfaces?

A: Clearly describe what the user can and can’t do. Keep system responses to the minimum necessary. Plan for error recovery. Consider multimodal fallbacks. Log unrecognized intents and iteratively improve the system using real user speech.

Q4: What are typical VUI usability challenges?

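A: Recognition errors, poor intent coverage, long or robotic responses, missing status feedback, privacy surprises, and risky actions triggered by overly broad NLU are typical challenges that frustrate VUI users and cause abandonment.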

Q5: What testing can be done before releasing a VUI?

A: Use Wizard of Oz prototyping, gather feedback from end users in closed testing, and use transcripts of error cases to design further stress tests (e.g., noisy audio and accents). Roll out gradually and monitor telemetry.

Q6: Should a product be voice-only or multimodal?

A: Use multimodal when a task benefits from visuals (long copy, maps, complex confirmations). Voice-only is ideal for quick, small hands-free tasks. Design flows that shift seamlessly between modes.

Q7: How much control should I give up to allow free-flowing conversation?

A: Allow fluid, natural phrasing for most confirmations and error messages, but keep the most important actions narrow and explicitly confirmed. Build a clear “help” and fallback lexicon.

Q8: What are the recommendations for privacy regarding a voice product?

A: Make data use clear, offer an opt-out or local processing option, and obtain clear consent, especially for sensitive actions. Keep retention periods as short as possible, and make them auditable.

Recommendations

- Treat voice as a product channel, not a gimmick. Focus on one or two high-value tasks and do them extremely well.

- Collect user data early: capture utterances during testing and let telemetry guide how the NLU grows.

- Prioritize clarity, speed, and trust (especially in 2026, when users expect generative-AI-style conversational capabilities but are concerned about privacy).